Delayed reaching retinotopy in EEG

This project was a collaboration with Scott Stone, supervised by Anthony Singhal.

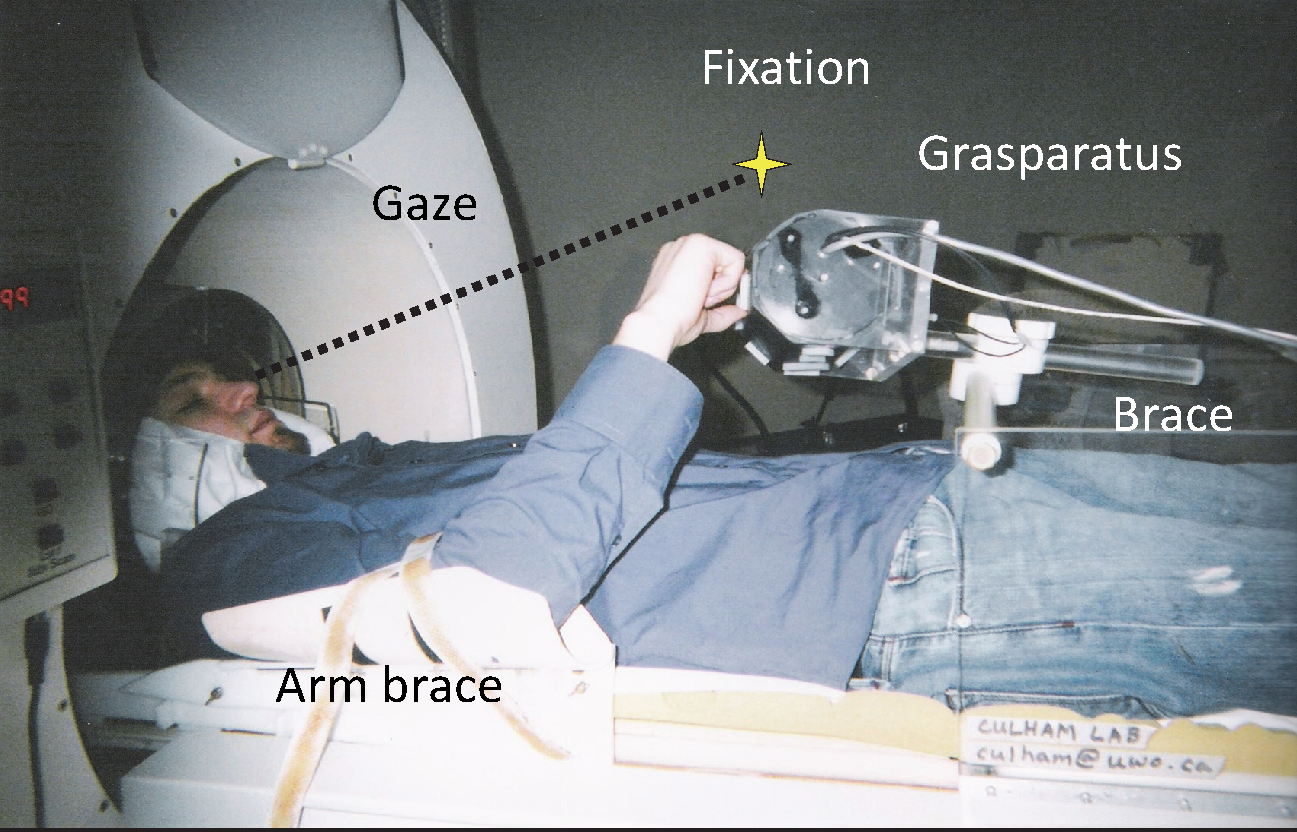

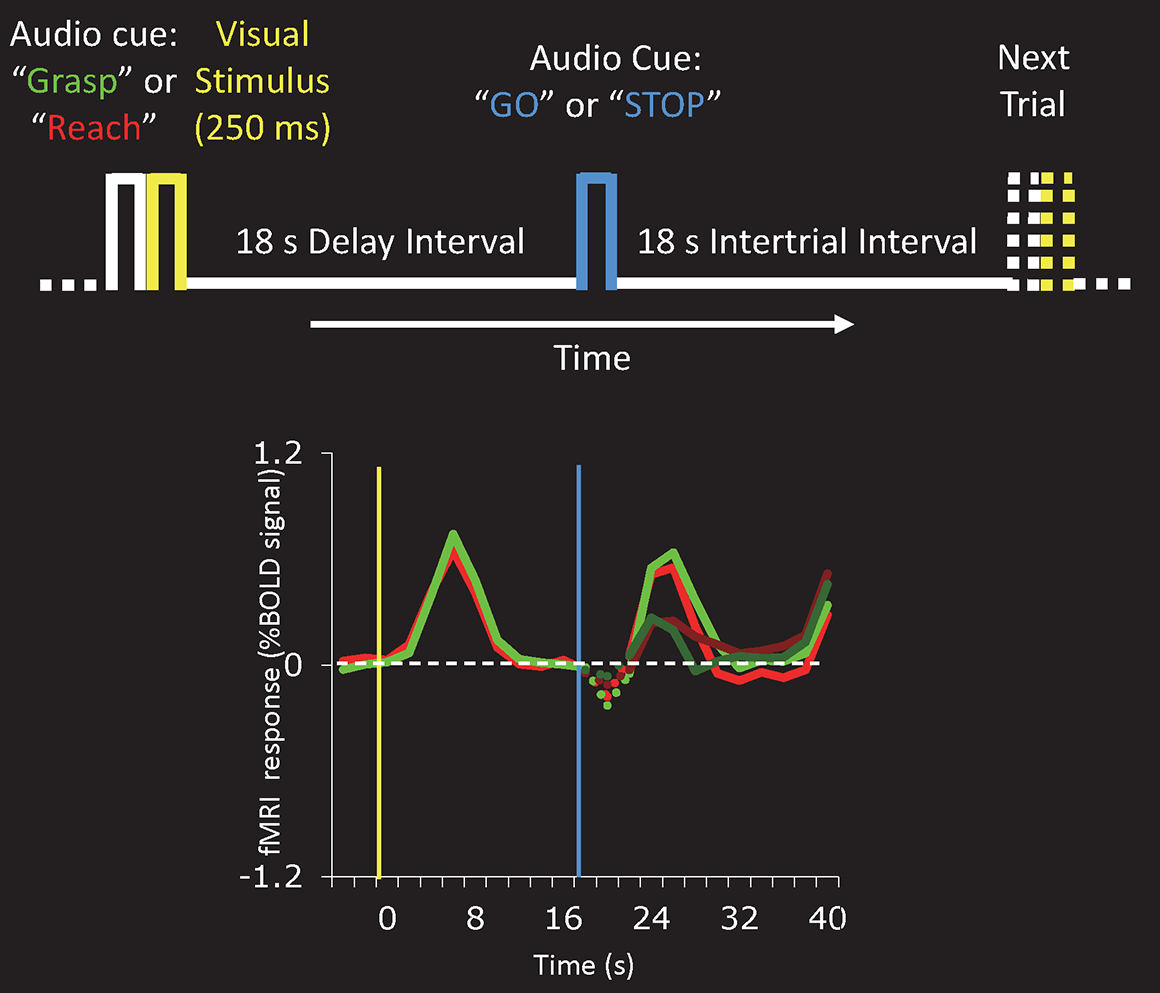

In a 2013 paper, Anthony and colleagues demonstrated that delayed action re-recruits visual perception. Participants in an MRI scanner briefly saw an object before the lights were turned off. After 18 seconds in the dark, a sound told the participants to reach and grab the object they had seen.

What do you think the brain is doing during those 18 seconds?

One idea might be that there is an increase in activity where the object is represented in the brain, and that this activity continues until you need to use this representation for action.

What they actually found is that early visual areas of the brain are briefly active when the lights are on and you can see the object, but then come back down to baseline when the lights are turned off. However, when cued to reach and grab the object, these same areas become active again, even when you cannot see anything.

You can see this pattern in the figure below. There is an increase in activity when the lights are on (at 0 seconds), a decrease while you are waiting in the dark, and then an increase again after the go cue (at 18 seconds). Because the blood-flow response that fMRI measures is slow, these changes show up about 6 seconds after the events that cause them.
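To make the roughly 6-second lag concrete, here is a minimal sketch that convolves two brief events (lights on at 0 seconds, go cue at 18 seconds) with a commonly used double-gamma approximation of the hemodynamic response. This is only an illustration, not the analysis from the paper: the 1-second event duration, the response parameters, and all function names are assumptions.

```python
import math

DT = 0.1  # time step in seconds (assumed, for illustration)


def hrf(t):
    """Double-gamma hemodynamic response: an early peak minus a late undershoot."""
    if t <= 0:
        return 0.0
    peak = t ** 5 * math.exp(-t) / math.gamma(6)
    undershoot = t ** 15 * math.exp(-t) / math.gamma(16)
    return peak - undershoot / 6.0


def predicted_response(event_onsets, event_duration=1.0, total=40.0, dt=DT):
    """Convolve a boxcar of brief events with the HRF (event duration is assumed)."""
    n = int(round(total / dt))
    stimulus = [0.0] * n
    for onset in event_onsets:
        start = int(round(onset / dt))
        stop = int(round((onset + event_duration) / dt))
        for i in range(start, min(stop, n)):
            stimulus[i] = 1.0

    kernel = [hrf(k * dt) for k in range(int(round(32.0 / dt)))]
    response = [0.0] * n
    for i, s in enumerate(stimulus):
        if s == 0.0:
            continue
        for k, h in enumerate(kernel):
            if i + k < n:
                response[i + k] += s * h * dt
    return response


def peak_time(response, start, stop, dt=DT):
    """Time (in seconds) of the largest value between start and stop."""
    i0, i1 = int(round(start / dt)), int(round(stop / dt))
    best = max(range(i0, i1), key=lambda i: response[i])
    return best * dt


response = predicted_response([0.0, 18.0])
first_peak = peak_time(response, 0.0, 18.0)
second_peak = peak_time(response, 18.0, 36.0)
print(f"lag after lights on: {first_peak:.1f} s")
print(f"lag after go cue:    {second_peak - 18.0:.1f} s")
```

Under these assumptions, both predicted peaks land several seconds after the events that drive them, which is why the fMRI time course looks shifted relative to the task.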

While fMRI gives us relatively good spatial resolution (where things are happening in the brain), it has relatively poor temporal resolution (when things are happening in the brain). However, measuring brain activity using EEG tends to give us the reverse: poor spatial resolution, but good temporal resolution.

Scott Stone and I put together a task to get at the re-recruitment of visual perception using EEG.

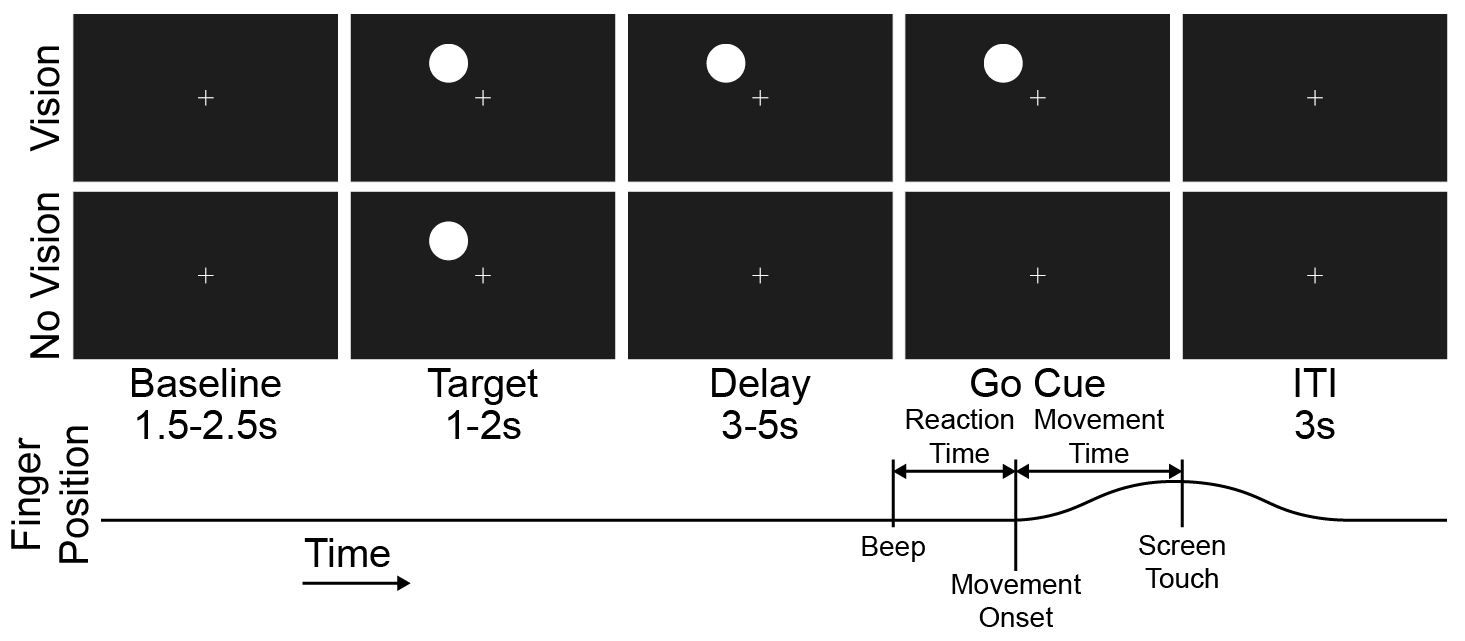

Below is the task timeline. A circle appears in one of the four quadrants on a screen, and after some delay you are cued to reach and touch the circle. On half of the trials the circle stays on the screen (Vision), and on the other half the circle disappears (No Vision).
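As a rough illustration of this design, the sketch below builds a shuffled trial list in which each of the four quadrants appears equally often in the Vision and No Vision conditions. The names, the number of repeats, and the assumption that quadrants were balanced are all hypothetical; the delay before the cue is left out because its length is not given here.

```python
import random
from collections import Counter

# Hypothetical labels for the four screen quadrants and the two conditions.
QUADRANTS = ("upper_left", "upper_right", "lower_left", "lower_right")
CONDITIONS = ("vision", "no_vision")


def make_trials(repeats_per_cell, seed=None):
    """Return a shuffled list with every quadrant/condition pair repeated equally."""
    trials = [
        {"quadrant": quadrant, "condition": condition}
        for quadrant in QUADRANTS
        for condition in CONDITIONS
        for _ in range(repeats_per_cell)
    ]
    random.Random(seed).shuffle(trials)
    return trials


trials = make_trials(repeats_per_cell=10, seed=0)
condition_counts = Counter(trial["condition"] for trial in trials)
print(len(trials), condition_counts["vision"], condition_counts["no_vision"])
# prints: 80 40 40
```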

While people were doing this task, we recorded their hand movements (blue line), eye movements (yellow circle), and brain activity (top left). Below is a video of me doing the task.

Before this project was shelved, we were preparing to look at these data using source modelling and machine learning classifiers.